Ray Caster for the Oculus Rift

Brian Fischer (bfischer)

Summary

The goal of this project is to develop a ray caster for use with the Oculus Rift.

Background

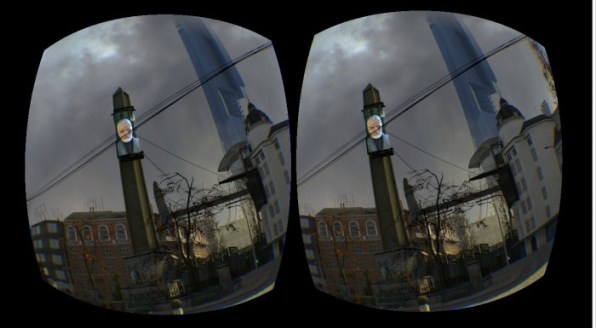

The Oculus Rift renders two images, one for each eye. The rendered images are not standard images; they are distorted so that, when displayed on the headset, they appear as normal images to the user. The usual approach renders a standard image and then distorts it, which costs extra work and leaves part of the originally rendered image unused. The goal of this project is to render and display the distorted images directly. The image above is an example of the kind of image the Raycaster would render.

Challenges

The largest challenge of this project will be understanding how image distortion works for the Oculus Rift and how to render the distorted image directly. Additionally, I hope to understand how the requirements for rendering a convincing scene on the Oculus Rift might differ from those for rendering a standard image.

Resources

There are a number of resources available online with information about how to use and develop for the Oculus Rift. Additionally, the Oculus Rift SDK comes with source code for a number of sample scenes. These will serve as a useful starting point for understanding the rendering process on the Oculus Rift.

Goals/Deliverables

The main deliverable of this project is a Ray Caster that will render distorted images for the Oculus Rift. Additional goals include an analysis of which kinds of images and textures do not render well on the Oculus Rift and what can be done to improve image quality when those kinds of objects are present.

Schedule

Oct 30 - Nov 7: Develop or find an interactive Raycaster to use for the project. Complete the Oculus Rift tutorials and understand the sample code base included with the SDK.

Nov 8 - Nov 14 (Checkpoint 1): Develop a model for the distorted Oculus Rift images. Determine how to modify the Raycaster in order to render an image based on this model.

Nov 15 - Nov 21: Modify the Raycaster in order to render distorted images for the Oculus Rift.

Nov 22 - Dec 1 (Checkpoint 2): Collect data on the Raycaster. Improve the efficiency and performance of the Raycaster. Identify problem images that don't display well and figure out how to improve them.

Dec 2 - Dec 12: Work on the final paper, analyze results, and catch up on anything that has fallen behind schedule.

Project Questions

What is the distortion function used to transform rendered images into the image displayed on the Oculus Rift?
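As a starting point for this question, here is a minimal sketch of one plausible form: a radial polynomial that scales a lens-centered point by a function of its squared distance from the center. The polynomial form, the function name `distort_radial`, and the coefficient values are assumptions made for illustration; the real function and coefficients would have to be confirmed against the SDK and the headset configuration.

```python
def distort_radial(x, y, k=(1.0, 0.22, 0.24, 0.0)):
    """Scale a lens-centered point (x, y) by a polynomial in r^2.

    The coefficients in k are illustrative placeholders (an assumption),
    not values confirmed for any particular headset.
    """
    k0, k1, k2, k3 = k
    r_sq = x * x + y * y
    # Horner form of k0 + k1*r^2 + k2*r^4 + k3*r^6
    scale = k0 + r_sq * (k1 + r_sq * (k2 + r_sq * k3))
    return x * scale, y * scale
```

Under this model the lens center is left in place, and points are pushed outward more strongly the farther they are from the center (for positive coefficients).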

How can this function be applied to the rays so that we can render an image as if the distortion function had been applied to it?
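One possible approach, sketched below, is to warp each pixel's image-plane coordinates before building its primary ray, so that the ray caster samples the scene where the distorted image would have looked. This is a sketch under stated assumptions, not a worked-out answer: it assumes a pinhole camera looking down the negative z axis, a lens centered on the image, and a radial polynomial warp with placeholder coefficients. The name `primary_ray` and all of its parameters are hypothetical.

```python
import math


def primary_ray(px, py, width, height, fov_y_deg=90.0,
                k=(1.0, 0.22, 0.24, 0.0)):
    """Return a unit ray direction for pixel (px, py) of one eye's image.

    Assumptions: pinhole camera looking down -z, lens centered on the
    image, and a radial polynomial warp with placeholder coefficients k.
    """
    aspect = width / height
    # Pixel center mapped to lens-centered coordinates, y pointing up.
    x = ((px + 0.5) / width * 2.0 - 1.0) * aspect
    y = 1.0 - (py + 0.5) / height * 2.0

    # Warp the image-plane point instead of warping a finished image.
    k0, k1, k2, k3 = k
    r_sq = x * x + y * y
    scale = k0 + r_sq * (k1 + r_sq * (k2 + r_sq * k3))
    x, y = x * scale, y * scale

    # Build the ray through the warped point and normalize it.
    t = math.tan(math.radians(fov_y_deg) / 2.0)
    dx, dy, dz = x * t, y * t, -1.0
    n = math.sqrt(dx * dx + dy * dy + dz * dz)
    return dx / n, dy / n, dz / n
```

Calling `primary_ray` once per pixel and per eye would replace the usual render-then-distort pass. Passing `k=(1.0, 0.0, 0.0, 0.0)` disables the warp, which gives an undistorted baseline to compare against.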

What is the performance of rendering a scene this way? How does it compare to using the Oculus Rift's distortion shader?

Bonus Questions

What kinds of images render poorly on the Oculus Rift, what causes the issues, and how can we fix them?

How can we address refraction at the bottom of images when using the Oculus headset?

Datasets

TBD